Hello guys, long time without posting! To compensate, this is going to be a long post because there is a lot to talk about. Also, this is the first post on the blog!

The Library

Well, our library is basically finished. We are now uploading more and more scans and filling it with lots of different categories. In the meantime, we are working on other cool stuff for you and, finally, making preparations for beta testing.

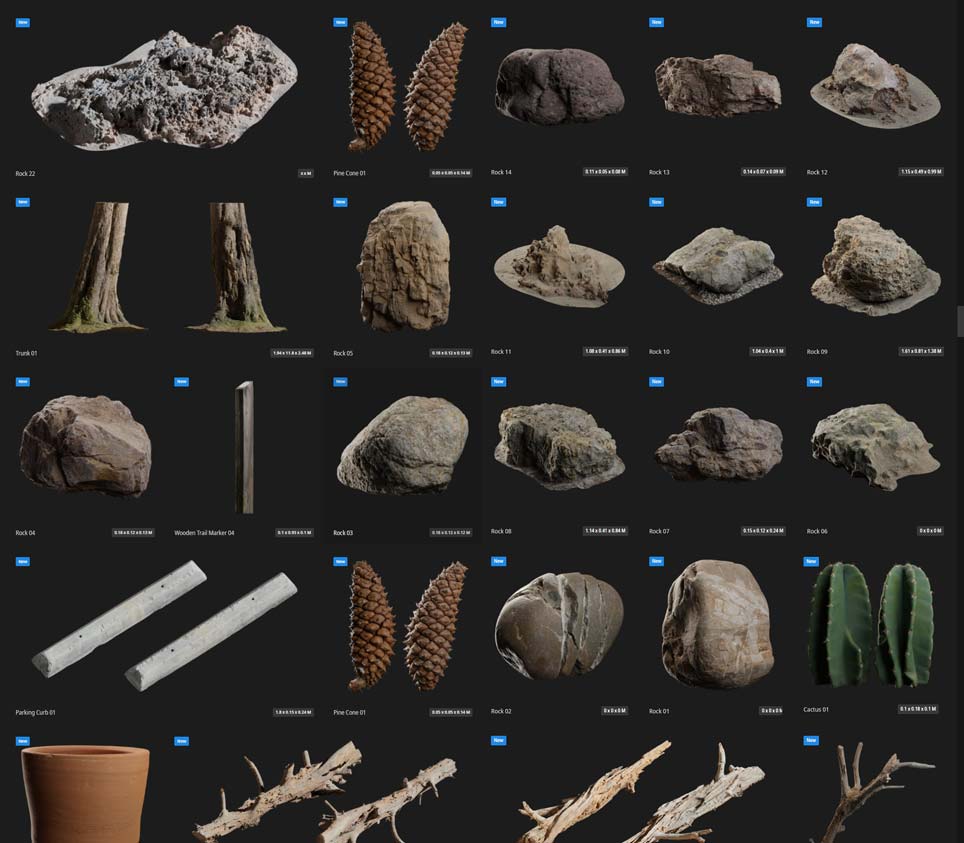

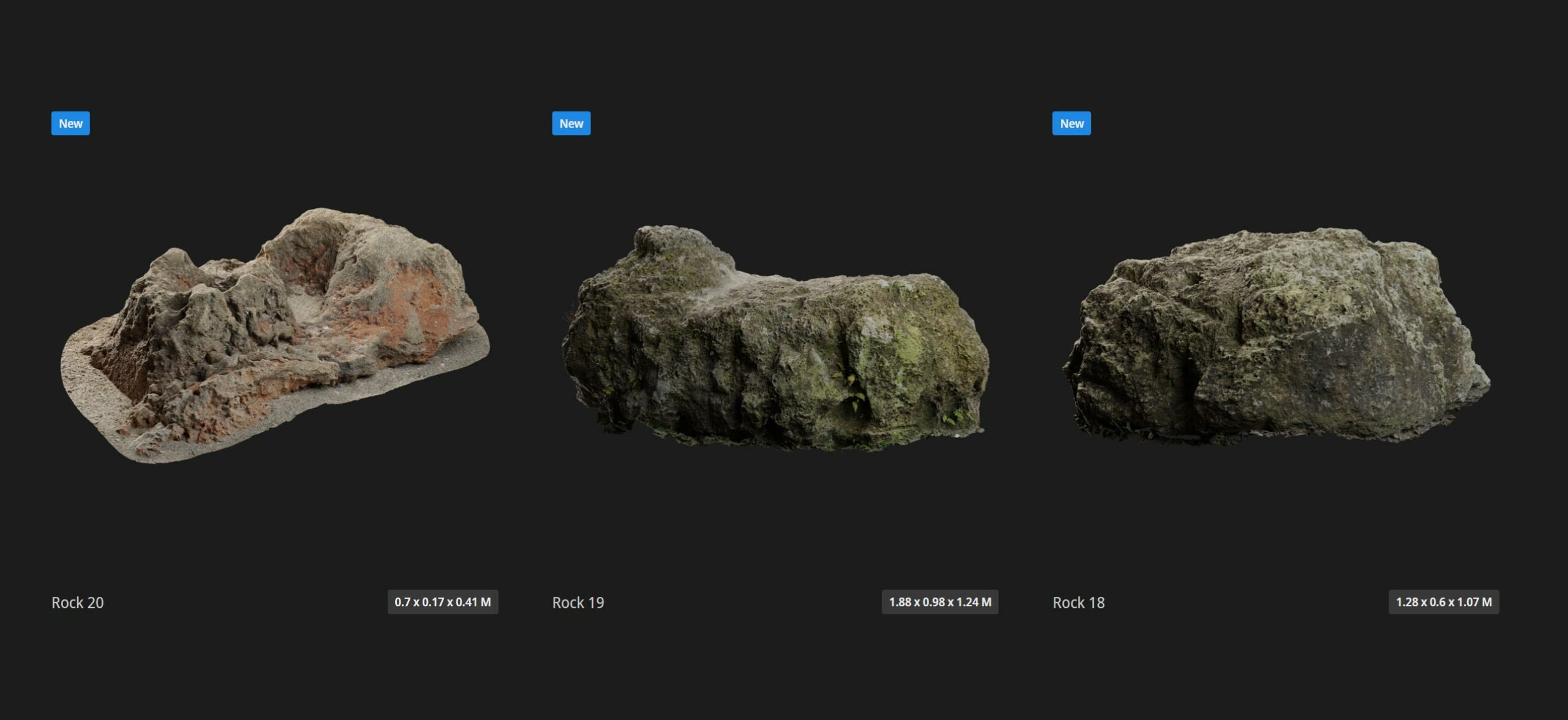

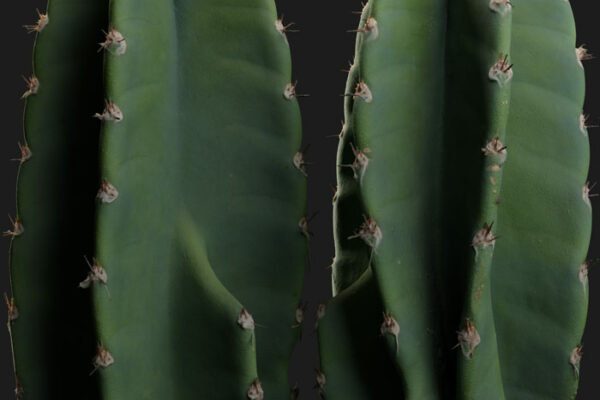

Here’s a rather small sample of our collection:

The Baker Tool

First of all, I want to apologize: I said I was going to post renders of our assets and I did not. Instead, I preferred to keep them secret a bit longer. I focused on training our team to create our assets so that I could personally spend more time on the tools we are going to release.

We’ve created several programs that improved our pipeline drastically. One of them handles fast baking of very high polygon counts (2-10 billion) with huge texture resolutions (80-100K), using any amount of RAM and supporting both CPU and GPU processing without limitations. This program is called Baker, and it’s one of the programs we will release with the new library. I will talk more about it at a later stage.

Basically, Baker lets you bake any polygon count at any resolution (literally any, though of course larger bakes take more time) using the CPU or the GPU (and yes, without being limited by the amount of VRAM). It bakes color, normal, height, ambient occlusion and any other custom map you need. It was engineered to be fast for demanding workflows with strict time frames. It offers single and double floating-point precision for processing, a difference that is most noticeable in the ambient occlusion and height maps, and it is powered by our render engine, Zeta Renderer.
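To give a rough idea of how a bake can stay independent of the amount of RAM or VRAM, here is a minimal sketch of tile-based processing: the image is walked one tile at a time, so peak memory depends on the tile size rather than on the full resolution. This is not Baker's actual implementation, which the post does not describe; `iter_tiles` and `peak_tile_bytes` are hypothetical names used only for illustration.

```python
from typing import Iterator, Tuple

Tile = Tuple[int, int, int, int]  # (x, y, width, height)


def iter_tiles(width: int, height: int, tile: int) -> Iterator[Tile]:
    """Yield tiles that cover a width x height image, row by row."""
    if width <= 0 or height <= 0 or tile <= 0:
        raise ValueError("width, height and tile must be positive")
    for y in range(0, height, tile):
        for x in range(0, width, tile):
            # Edge tiles are clipped so they never extend past the image.
            yield x, y, min(tile, width - x), min(tile, height - y)


def peak_tile_bytes(tile: int, channels: int, bytes_per_channel: int) -> int:
    """Memory needed to hold one full-size tile (hypothetical helper)."""
    return tile * tile * channels * bytes_per_channel


if __name__ == "__main__":
    # A 100K x 100K target split into 4096-pixel tiles, RGBA 32-bit float.
    tiles = list(iter_tiles(100_000, 100_000, 4096))
    print(len(tiles), "tiles,", peak_tile_bytes(4096, 4, 4), "bytes per tile")
```

Each tile can be baked and written to disk before the next one is loaded, which is why a larger target only costs more time, not more memory.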

The creation of the library

“But how/where do you store all this data? I mean, there’s no file format that supports these resolutions”.

It’s almost 2022 and we are still using image file format specifications that are 30, some almost 40, years old and come with very silly limitations. These may have made sense back in the days of 32-bit machines, but for today’s requirements, well... I’m surprised we keep using them. I mean limitations like the 4GB maximum file size, the limited support for color depth, etc. But they are just that, specifications, and as a software developer one chooses whether to follow them or not, right? The problem is that most developers keep following the same old specifications, and if you choose to implement them in a different way, other programs won’t be able to read your files. For this reason we have implemented our own file format, and we will support it across our whole program family from now on. It’s called Friendly Shade Image Format (FSI for short). It supports any resolution and up to 64-bit color depth (imagine building worlds with 64-bit vector displacement textures 😍).
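To put the 4GB limit in perspective, here is a small sketch that computes the uncompressed size of an image and checks whether that byte count fits in a 32-bit unsigned field. The function names are hypothetical and the calculation ignores headers and compression, so treat it as back-of-the-envelope arithmetic rather than a description of any particular format.

```python
UINT32_MAX = 2**32 - 1  # largest byte count a 32-bit unsigned field can hold


def raw_image_bytes(width: int, height: int, channels: int,
                    bits_per_channel: int) -> int:
    """Uncompressed pixel data size in bytes (no header, no padding)."""
    if bits_per_channel % 8 != 0:
        raise ValueError("this sketch only handles whole-byte channel depths")
    return width * height * channels * (bits_per_channel // 8)


def fits_in_uint32(byte_count: int) -> bool:
    """True if the size can be stored in a 32-bit unsigned field."""
    return byte_count <= UINT32_MAX


if __name__ == "__main__":
    for side, channels, bits in [(16_384, 4, 8), (80_000, 3, 8), (100_000, 3, 16)]:
        size = raw_image_bytes(side, side, channels, bits)
        print(f"{side}x{side}, {channels} channels, {bits}-bit: "
              f"{size:,} bytes, fits in 32 bits: {fits_in_uint32(size)}")
```

An 80K RGB texture at 8 bits per channel already needs 19.2 billion bytes of raw pixel data, several times what a 32-bit size field can describe.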

“But, the Photoshop file format PSB does support high resolutions, doesn’t it?”

Adobe Photoshop’s PSB is the only one that doesn’t have these limitations, but the complexity of its file format specification makes implementing it a huge task. Also, it’s not meant for simple images: it stores information about layers, filters and other Photoshop-specific things, which seems overkill just to be able to store images larger than 32K. It’s their document file format after all, not just an image file format.

“But, will Photoshop be able to support your file format?”

We have been working on that for a while, but guess what? Photoshop is another one of those programs with really bad APIs (Application Programming Interface: in simple words, the part of the code they expose for you to use and build on). The API is full of bugs and, worst of all, it still has the same 32-bit limitation, again!! Even the latest 2021 API/SDK is still limited to 32-bit precision data, which in simple words means it won’t allow us to work with images larger than 32,768×32,768, yikes! So, we either have to convince Adobe to update their old ’90s API or... build our own tool for manipulating images? Maybe we are already working on that? Well, that’s a story for another blog post.
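The post does not say exactly where the SDK's 32-bit constraint sits, so the following is only one plausible reading: if the size of a pixel buffer is stored as a 32-bit unsigned byte count, then a 32,768×32,768 image at 4 bytes per pixel needs exactly 2^32 bytes, one more than such a field can hold. The helper name `max_square_side` is hypothetical.

```python
from math import isqrt


def max_square_side(bytes_per_pixel: int, size_bits: int = 32) -> int:
    """Largest square image side whose raw byte count fits in size_bits bits.

    Assumes the buffer size is stored as an unsigned integer of size_bits
    bits; this is an illustration, not the documented SDK behaviour.
    """
    max_bytes = 2**size_bits - 1
    return isqrt(max_bytes // bytes_per_pixel)


if __name__ == "__main__":
    print("32-bit size, 4 bytes/pixel:", max_square_side(4))
    print("64-bit size, 4 bytes/pixel:", max_square_side(4, size_bits=64))
```

With a 64-bit size field the same calculation gives a side length in the billions of pixels, so the limit effectively disappears for texture work.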

This is not the first, and probably not the last, limitation we have found in a program during our journey creating an asset library. Blender, for instance, is not able to import or export your models beyond a certain polygon count; it will just make your whole computer crash. A common problem we see in different programs (not only 3D modeling programs) is that they keep multiple copies of the same data in RAM. Sometimes that is necessary (in quite rare scenarios), but not during a simple task such as exporting a model. Sometimes the same data is copied two or even three times, which makes a 3 million polygon model feel like a 10 million one for your poor computer. For this reason we have been taking Blender and other programs out of our pipeline for certain steps, as our requirements make some of these tasks impossible to accomplish in them. What about importing a texture larger than 16K? Will it tell you it does not support it? Nope, it prefers crashing instead 😑.

And believe me when I say I’m not trying to be the one who complains about programs just because, or the business owner who tries to sell you their product by speaking badly of someone else’s; that’s not the goal. In fact, it would have been much more convenient for us and for you, artists, if it were easier to integrate our tools with other companies’ products. Life would be so much easier!
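As an illustration of the point about extra copies during export, here is a minimal sketch of a streaming exporter: it writes a Wavefront OBJ file one line at a time from iterables, so it never builds a second in-memory copy of the mesh. It is not how Blender or our own tools export models; `write_obj` is a hypothetical function written only to show the idea.

```python
import io
from typing import Iterable, TextIO, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, int, int]  # zero-based vertex indices


def write_obj(vertices: Iterable[Vertex], faces: Iterable[Face],
              out: TextIO) -> None:
    """Stream a triangle mesh to `out` in OBJ format, one line per element."""
    for x, y, z in vertices:
        out.write(f"v {x} {y} {z}\n")
    for a, b, c in faces:
        # OBJ face indices are one-based.
        out.write(f"f {a + 1} {b + 1} {c + 1}\n")


if __name__ == "__main__":
    buf = io.StringIO()
    write_obj([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)],
              [(0, 1, 2)], buf)
    print(buf.getvalue(), end="")
```

Because the function accepts any iterable, the vertices and faces can come from a generator that reads the source data in chunks, which keeps memory use roughly constant regardless of polygon count.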

To wrap things up

We are quite far away from “the robots taking over the world”. In fact, the programs we use nowadays are full of bugs and limitations, and the people behind them just seem to care about producing more and more but... not better. These programs are, in the end, just a bulk of code written in an interpreted language in a desperate attempt to earn a lot of money in the shortest possible amount of time (and just to be clear, I’m not referring to Photoshop or Blender specifically, just in general to most programs we use on a daily basis for 3D modeling, rendering, texture and material creation, simulations, etc.). What if we produced better tools instead of finding quicker ways to create programs?

Thank you

Cheers, Abdulrahman! ^_^

Exciting news. Can’t wait to see the full potential of the Zeta Renderer. I remember that anticipation when Corona Renderer came out, and I have the same feeling again now for Zeta.

Good luck, Sebastian.

Oh wow, thank you George! I’m very glad to read that, and I hope it will be useful to you when it comes out!

What about EXR?

Don’t think that was limited in pixel resolution, and 32-bit float would be more than enough in bit depth, I would think.

Stan

Hey Stanley!

EXR is actually one of the worst formats. It has a file size limit of 4GB in most implementations, and it doesn’t support integral types, only floating-point types (in other words, no support for 16-bit or 8-bit integers). It’s limited to three channels, so if you need to store a grayscale image in it, you’ll be forced to keep three copies of the same channel, which makes it easier to hit that 4GB limit.

Thanks for the update, your commitment to engineering a high quality family of products is impressive. I have no doubt that the time you have all invested will be reflected once you launch. I can’t wait to take the beta for a spin. All the best to you and your team!

Thank you so much for taking the time to comment, Shawn! I hope these will be of help in your day-to-day workflow when they are released.

This is going to be amazing! Very much looking forward to using the software! Are there any plans for a Linux version or is it going to be Windows only?

Hi Pascal, Thank you for taking the time to read the article! The early versions are going to be for Windows only but the program has been written with portability in mind. We’ll look into the possibility of porting it to Linux, though I’m still not sure how long it will be until we reach that stage.

Cheers!

Looking forward to the Baker tool! I bought the first two libraries when they were released and they are great quality!

Hi! Baker is already released at https://friendlyshade.com/. You can also find more information about it on our forum: https://forum.friendlyshade.com/t/how-to-use-baker-cli/47

If you wish, we can issue a free one-month trial so you can test it. I’d be happy to hear what you think.

Best,

Paola

paola@friendlyshade.com

Also would love Mac versions of the software! Is it in the pipeline?

If there are enough requests from Mac users, it will be released in the coming months. Currently, we’re only focusing on Windows 🙂